Report: Microsoft Australia DX hackfest (July)

An important part of being a Technical Evangelist at Microsoft is continuously upskilling and playing with different technologies. Taking 2 days out a month to sit down together and hack gives us a chance to learn from each other. For example, Simon briefly mentioned that he was playing with Xamarin Forms & Android development but was having issues with the Intel Android emulators, so I was able to quickly show him the new Visual Studio ones that run on Hyper-V. Conversely, I was having issues with NodeJS that Simon & Elaine were able to help me out with.

And of course, we took the time out for our usual #TacoTuesday

Like our previous hacks (/2017/05/25/report-microsoft-australia-dx-hackfest/), the Melbourne team was hosted by Frank Arrigo out at the Telstra Innovation Labs. We also had Azadeh joining in remotely from Sydney, and Hannes remotely from New Zealand.

David (Me) - Meme classifier

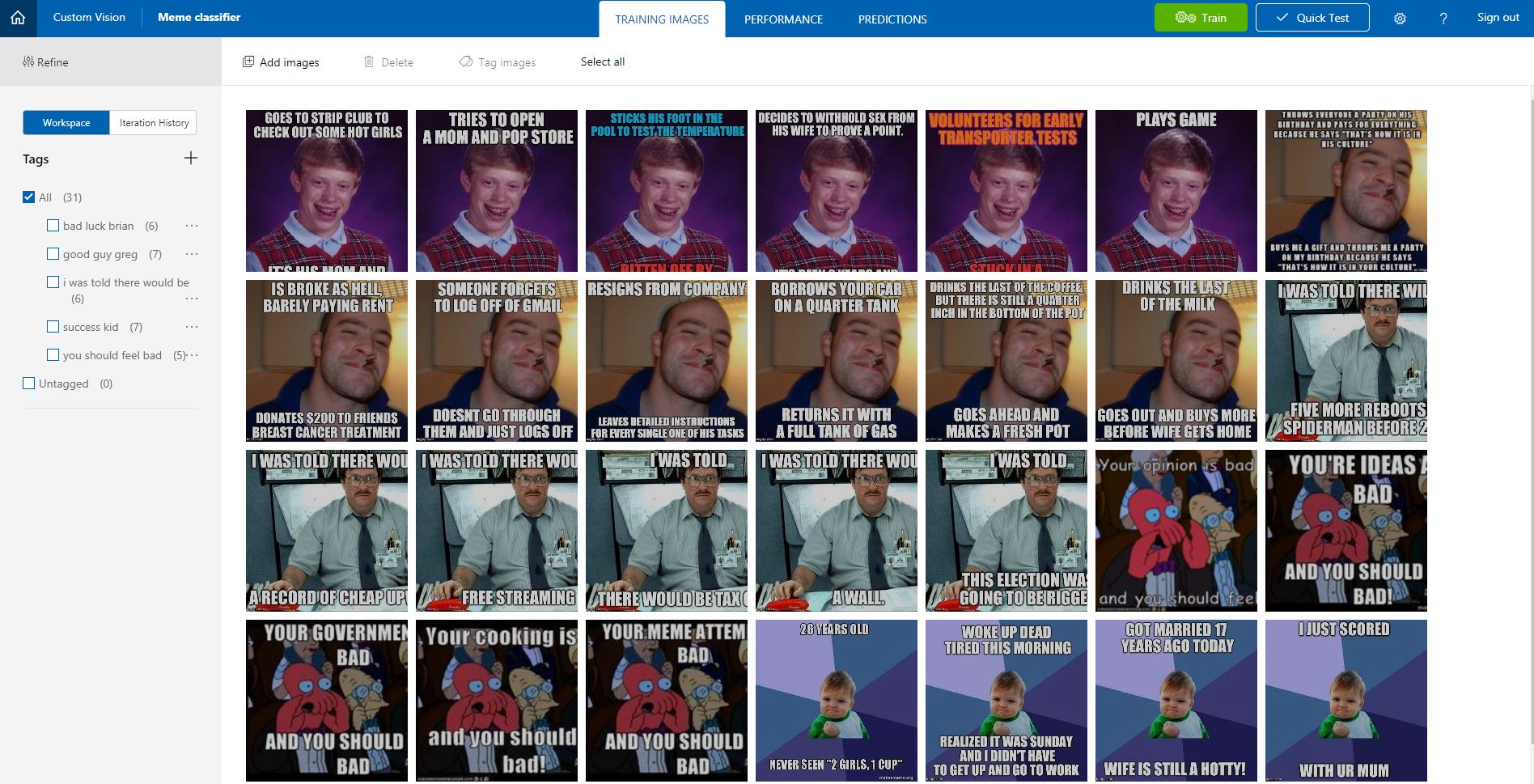

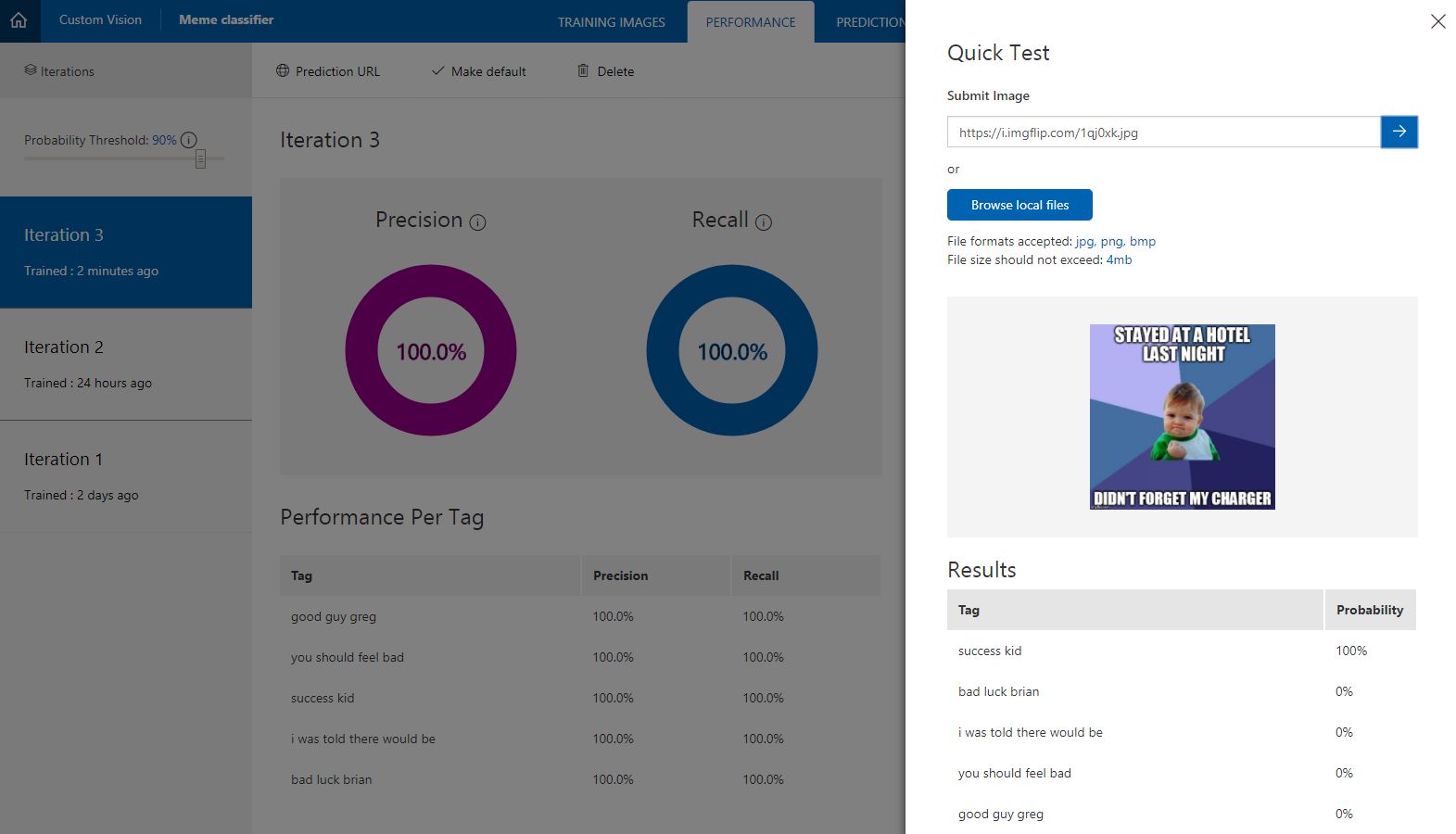

I decided to make a system that could automatically classify Internet memes. There are whole subcultures on Reddit dedicated to them, one of my favourites being https://www.reddit.com/r/AdviceAnimals/. I wanted to use the new Custom Vision service https://CustomVision.ai/ to train a model on the different meme types, so that I could upload a meme and be told which category it belongs to.

Training the custom AI was easy: I uploaded samples that I got off Reddit and clicked train. When I tested it with other images, it identified them correctly. Creating and training only took 10 minutes; I spent way longer browsing Reddit looking at memes ^_^;;

Next I wanted to build a chat bot that lets people upload an image, has the AI return the category, and sends a link to the correct page on Know Your Meme, e.g. Success Kid. I decided it would be a great time to try out the Microsoft Bot Framework for NodeJS. I have used NodeJS & npm to download and use Blockchain toolchains, but never developed directly on it.
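The bot's core step, going from a prediction to a reply with a Know Your Meme link, can be sketched roughly as below. This is not the code from the hack (which targeted NodeJS and was not finished); it is a minimal Python sketch. The `tagName` and `probability` field names are an assumption about the shape of the prediction result, and the slugs in `MEME_SLUGS` are illustrative placeholders rather than verified page addresses.

```python
from typing import Dict, List, Optional

KNOW_YOUR_MEME_BASE = "https://knowyourmeme.com/memes/"

# Hypothetical lookup from a trained tag name to a Know Your Meme slug.
# The slugs are placeholders for illustration, not verified URLs.
MEME_SLUGS: Dict[str, str] = {
    "Success Kid": "success-kid",
    "Bad Luck Brian": "bad-luck-brian",
}


def top_category(predictions: List[dict], min_probability: float = 0.5) -> Optional[str]:
    """Return the most likely tag name, or None if nothing clears the threshold."""
    if not predictions:
        return None
    best = max(predictions, key=lambda p: p["probability"])
    if best["probability"] < min_probability:
        return None
    return best["tagName"]


def reply_for(predictions: List[dict]) -> str:
    """Build the chat reply for one classified image."""
    category = top_category(predictions)
    if category is None:
        return "Sorry, I don't recognise that meme."
    slug = MEME_SLUGS.get(category)
    if slug is None:
        return f"That looks like {category}."
    return f"That looks like {category}: {KNOW_YOUR_MEME_BASE}{slug}"
```

The threshold guards against replying with a confident-sounding answer when the classifier is only guessing; 0.5 is an arbitrary starting point.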

I didn't have enough time to fully build out the chat bot, but I learned HEAPS about using VS Code and debugging NodeJS apps in it. There are lots of little gotchas when developing with NodeJS for the first time.

Azadeh (remote from Sydney)

https://twitter.com/azadehkhojandi/status/886842072392622081

I wanted to solve a first world problem that most of us have: have I turned off my hair straightener?

It turns out that lots of people have the same problem; please read http://www.ismyhomesafe.ca/did-you-forget-to-turn-off-your-hair-straightener/ and https://www.honeywell.com/newsroom/news/2014/12/new-research-uncovers-fear-of-leaving-on-appliances-is-a-major-worry

To solve the problem I used a WeMo switch. I created two recipes/applets in IFTTT, one for turning the WeMo switch on and one for turning it off. Basically, I got two endpoints for turning the switch on and off.

To make it more user-friendly and accessible, I used Azure Bot Service and created a chat bot that accepts commands to turn the switch on and off.

I used LUIS to understand intents and call the proper endpoint based on the command.
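As a rough illustration of that routing step (not the code in the linked repository), the sketch below maps a recognised intent to one of the two IFTTT endpoints. The intent names, event names, and the `topScoringIntent` result shape are assumptions made for the example; the URL follows the IFTTT Webhooks pattern `https://maker.ifttt.com/trigger/{event}/with/key/{key}`.

```python
from typing import Dict, Optional
from urllib.parse import quote

IFTTT_KEY = "YOUR_IFTTT_KEY"  # placeholder, replace with your own Webhooks key

# Hypothetical intent names (as trained in LUIS) and IFTTT event names.
INTENT_TO_EVENT: Dict[str, str] = {
    "TurnOn": "wemo_on",
    "TurnOff": "wemo_off",
}


def webhook_url(event: str, key: str) -> str:
    """Build the IFTTT Webhooks trigger URL for one event."""
    return f"https://maker.ifttt.com/trigger/{quote(event)}/with/key/{quote(key)}"


def endpoint_for(luis_result: dict, key: str = IFTTT_KEY,
                 min_score: float = 0.6) -> Optional[str]:
    """Pick the endpoint to call for a LUIS result, or None if unsure.

    Assumes the result looks like:
    {"topScoringIntent": {"intent": "TurnOn", "score": 0.97}}
    """
    top = luis_result.get("topScoringIntent") or {}
    event = INTENT_TO_EVENT.get(top.get("intent"))
    if event is None or top.get("score", 0.0) < min_score:
        return None
    return webhook_url(event, key)
```

Returning `None` for unknown or low-confidence intents lets the bot ask the user to rephrase instead of switching an appliance on by mistake.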

I hosted the source code on GitHub and set up continuous integration so that after every push to master, the new code is deployed to Azure Bot Service and the bot is updated.

Source code: https://github.com/Azadehkhojandi/WemoBot

Rian

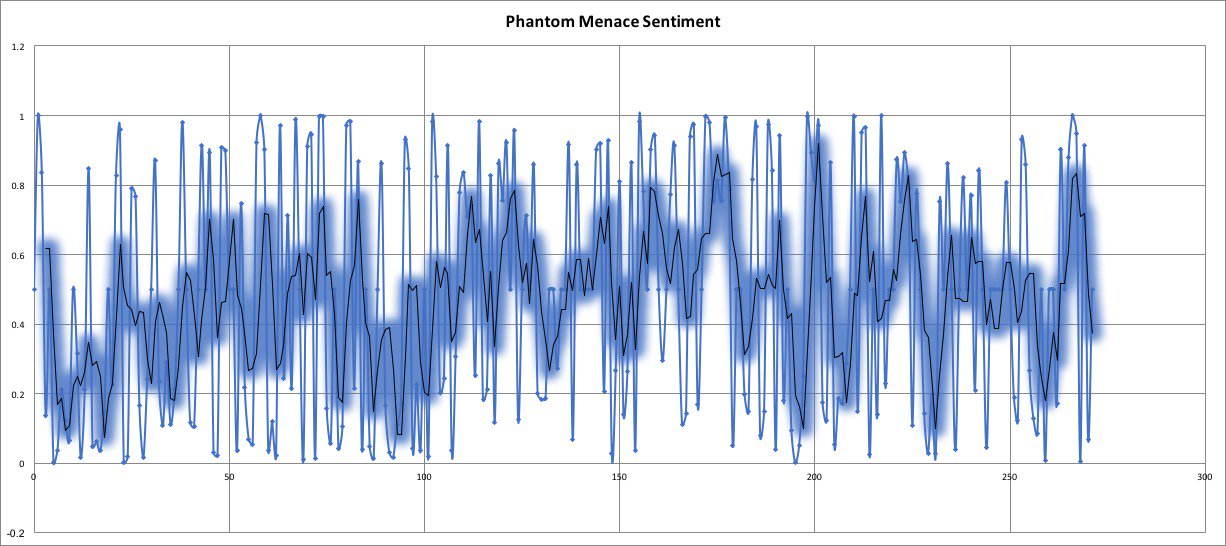

I used Azure Cognitive Services Text Analytics to analyse Star Wars subtitle tracks. Topic Detection and Sentiment Analysis both seemed like good candidates.

Key Learnings:

1) Topic Detection doesn't work well with many very small 'documents' (e.g. individual lines of subtitles), some as short as one word. A better approach was to approximate scenes and aggregate lines into larger documents.

2) Sentiment data is very noisy. A naive prediction is that such a sentiment analysis would track the cadence of the film. This is not at all the case, as you can see in the graph of the sentiment of The Phantom Menace.

3) Slang and colloquialisms break topic detection, e.g. Jar Jar Binks' lines like 'mesa in trouble'. These should be excluded from the Topic Detection algorithm using the Stop Words or Stop Phrases fields in the request.
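To make learnings 1 and 3 concrete, here is a minimal sketch of batching subtitle lines into pseudo-scene documents and attaching stop phrases to a request body. It is not the code used in the hack. Fixed-size batching is a simplification of "approximating scenes", and the `documents` and `stopPhrases` field names are assumptions about the request format.

```python
from typing import Dict, Iterable, List


def batch_lines(lines: List[str], batch_size: int) -> List[str]:
    """Join consecutive subtitle lines into larger pseudo-scene documents."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    # Drop blank lines so they don't count towards a batch.
    cleaned = [line.strip() for line in lines if line.strip()]
    return [
        " ".join(cleaned[i:i + batch_size])
        for i in range(0, len(cleaned), batch_size)
    ]


def build_topic_request(lines: List[str], batch_size: int,
                        stop_phrases: Iterable[str]) -> Dict:
    """Build a request body with one document per pseudo-scene.

    The field names used here are assumed, not taken from the original post.
    """
    documents = [
        {"id": str(index), "text": text}
        for index, text in enumerate(batch_lines(lines, batch_size), start=1)
    ]
    return {"documents": documents, "stopPhrases": list(stop_phrases)}
```

Varying `batch_size` changes how coarse the pseudo-scenes are, which makes it easy to compare results across different aggregation levels.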

The plot below tracks sentiment across all pseudo-scenes throughout the film. You can see the data is highly variable and does not seem to follow the cadence of the film. A further research question might be to vary the size of pseudo-scenes (i.e. to aggregate lines into variable sized batches), and run sentiment analysis on all these pseudo-scenes. The result may better approximate the cadence of the film.

Hannes (remote from NZ)

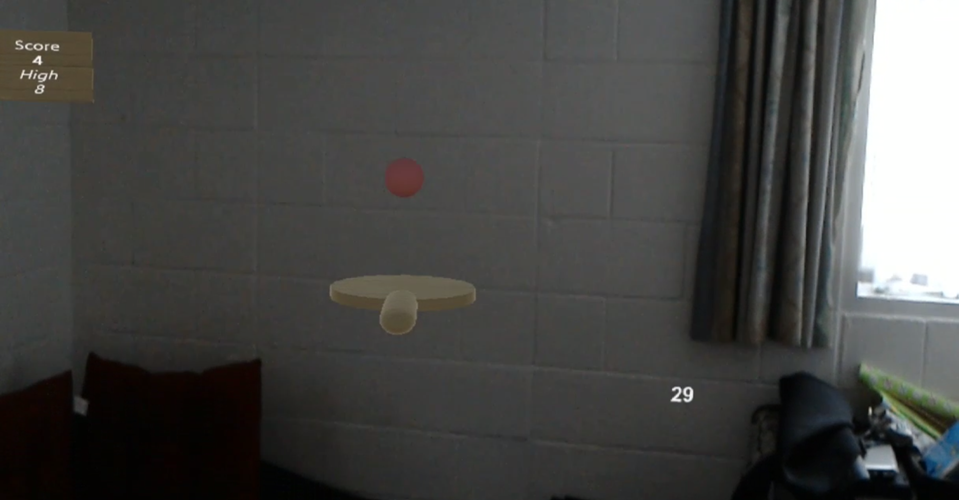

The app is made using Unity and the HoloToolkit.

You can see how far along progress currently is in this video.

The idea is to bounce a table tennis ball on a paddle that you drag around with your hand. It has a scoreboard that tracks your high score for the session.

When you open the game, you are presented with a paddle and a ball hanging in the air above it. To start the game, you simply tap and hold on the paddle, which starts the ball falling. Keep the paddle under the ball to make it bounce. You get a point every time the ball bounces on the paddle. Releasing the paddle resets the position of the ball.
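The scoring rules described above can be modelled in a few lines. The real app is built in Unity, so this Python sketch only illustrates the game state, not the actual implementation. The class and method names are hypothetical, and it assumes that releasing the paddle also resets the current score, which the description does not state explicitly.

```python
class PaddleGame:
    """Tracks the current score and the session high score."""

    def __init__(self) -> None:
        self.score = 0
        self.high_score = 0
        self.holding = False

    def hold_paddle(self) -> None:
        """Tap and hold: the ball starts falling and bounces can score."""
        self.holding = True

    def bounce(self) -> None:
        """The ball hit the paddle: one point per bounce while holding."""
        if not self.holding:
            return
        self.score += 1
        self.high_score = max(self.high_score, self.score)

    def release_paddle(self) -> None:
        """Release: the ball goes back to its start position.

        Resetting the current score here is an assumption.
        """
        self.holding = False
        self.score = 0
```

A short session would look like this: `hold_paddle()`, a few calls to `bounce()`, then `release_paddle()`, after which `high_score` keeps the best run while `score` starts again from zero.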